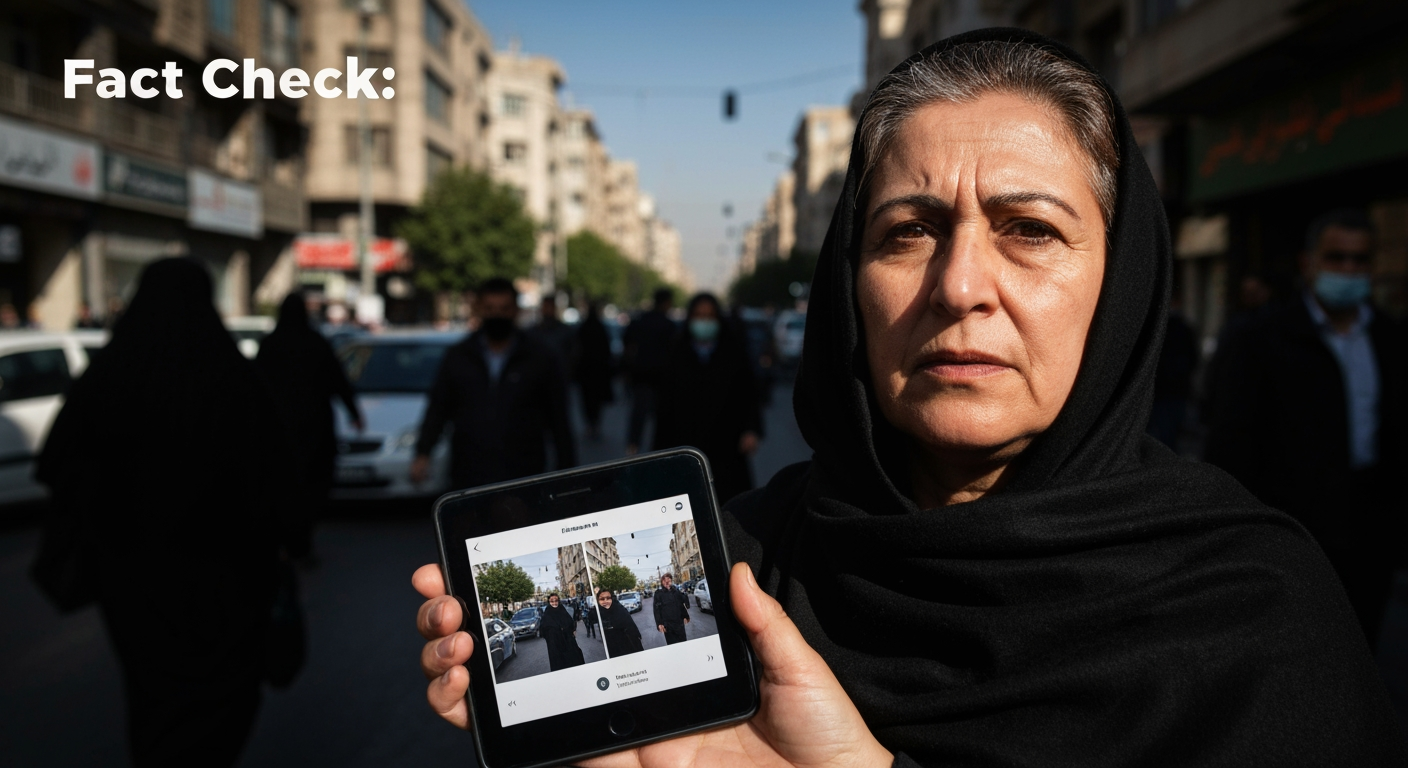

Digital Deception: AI Fakes and Old Videos Obscure Reality of Iran Protests

A pervasive wave of digital deception is profoundly distorting the public's understanding of the ongoing protests in Iran, as both artificial intelligence-generated content and old, repurposed videos flood social media platforms. This sophisticated misinformation campaign blurs the lines between reality and fabrication, making it increasingly challenging for both domestic and international audiences to ascertain the true nature, scale, and implications of the widespread unrest. The deliberate spread of misleading visuals poses a significant threat to journalistic integrity and public trust, transforming the digital sphere into a battleground where narratives are meticulously crafted and manipulated.

The Digital Fog of War: Repurposed Footage and AI Fabrication

The internet has become a crucial, yet treacherous, conduit for information about the Iran protests, with a proliferation of videos and images that are either fabricated by AI or taken from old footage and deceptively re-contextualized. This "digital fog of war" makes accurate reporting and public discernment exceedingly difficult. One common tactic involves circulating videos from past events, or from other countries entirely, and falsely presenting them as current scenes from Iran. For instance, a video depicting individuals hurling Molotov cocktails, widely shared with claims that it showed Iranian demonstrations, was in fact filmed in Greece in November 2025. Similarly, footage from a 2022 protest in Sanandaj, showing the destruction of surveillance cameras, was misleadingly presented as showing recent events. Old military footage, some of it from Russia and dating back eight years, has also been repurposed and attributed to recent conflicts involving Iran, further muddying the informational waters.

Adding another layer of complexity, generative artificial intelligence tools are increasingly being deployed to create entirely new, fabricated content that appears disturbingly realistic. Examples include AI-generated images of a "two-headed policeman" or of women lighting cigarettes with an image of Ayatollah Khamenei, circulated to exaggerate police brutality or stir specific sentiments. During a period of heightened tensions involving Iran, AI-generated images purported to show the wreckage of a U.S. B-2 bomber inside Iran's territory and destruction in Tel Aviv after missile strikes; both were later debunked. Another AI-generated video falsely depicted people protesting for an end to the Israel-Iran conflict, with telltale signs of manipulation such as distorted body parts and disappearing flags. The ease of access to advanced AI tools means such fabricated content can go viral rapidly, often amassing millions of views before being identified as false.

Actors and Agendas: Who is Behind the Deception?

The propagation of misinformation surrounding the Iran protests is not attributable to a single actor but appears to be a multi-faceted effort involving various entities with differing agendas. Iranian authorities themselves face accusations of orchestrating coordinated disinformation campaigns to undermine nationwide protests. State-linked networks are alleged to spread fake news, manipulated videos, and misleading narratives to fracture public unity and suppress demands for political change. Tactics reportedly include altering protest videos with fabricated audio, such as chants calling for the restoration of the monarchy, to redirect narratives and portray the uprising as a monarchist movement rather than a broad rejection of authoritarian rule. The Iranian regime has a history of employing disinformation, using state media to control public opinion and discredit dissent by portraying protests as foreign-backed or separatist in nature. Regime officials have also explicitly accused opponents of using AI to fabricate videos and images of anti-government chants and violence, an accusation that serves to discredit independent verification by suggesting that much of the circulating footage is AI-generated by "enemy-affiliated outlets".

Conversely, external actors are also actively engaged in information warfare. Researchers at Citizen Lab reported on the "PRISONBREAK" campaign, a coordinated Israeli-backed network that leveraged dozens of social media accounts to push anti-government propaganda, including deepfakes and other AI-generated content, to Iranians. The goal of this campaign was to stoke unrest and encourage the overthrow of the Iranian government. These networks "routinely used" AI-generated imagery and video, mimicking real news outlets to spread false content during periods of real-world kinetic attacks. Beyond state-sponsored or politically motivated efforts, some individuals or groups may be driven by financial gain, as viral fake content can rapidly increase follower counts and engagement on social media platforms.

The Blurring of Truth and its Consequences

The pervasive spread of AI-generated fakes and out-of-context old videos carries severe consequences for the understanding and outcome of the Iran protests. This digital deception makes it incredibly difficult for individuals, journalists, and international observers to discern credible information, thereby undermining trust in reporting and potentially diluting the legitimate concerns of protesters. When misinformation is rampant, it can obscure the true scale of events, such as the reported thousands of deaths and arrests during crackdowns. The Iranian government has capitalized on this environment, with internet blackouts severely restricting access to information and enabling a more brutal crackdown, while simultaneously blaming external actors for fabricating content.

The constant barrage of misleading content risks desensitizing the public to authentic atrocities and genuine calls for change. When both sides of a conflict employ similar tactics, the result is an environment in which all information is viewed with suspicion, potentially leading to apathy or further polarization. Experts warn that algorithmic amplification spreads emotionally charged falsehoods far faster than verified information, making it harder for the truth to catch up. This manufactured confusion can serve to discredit legitimate opposition movements by portraying them as manipulated or inauthentic, or conversely, to destabilize a region by amplifying false narratives of chaos.

The Fact-Checkers' Battleground: Identifying and Debunking Fakes

In response to this escalating digital threat, fact-checkers, journalists, and technological solutions are locked in a continuous battle to identify and debunk fabricated content. Organizations like DW's fact-checking team and initiatives like FactSeeker are actively working to expose AI-generated videos and repurposed old footage, often using reverse image searches and forensic analysis to trace the origins of misleading visuals. Forensic tools that analyze metadata, audio spectrograms, and visual artifacts are becoming crucial for distinguishing real content from synthetic creations. Companies like GetReal are developing technologies to verify and authenticate digital content, acknowledging that this is an "arms race" against increasingly sophisticated generative AI tools.

However, the rapid advancement of AI technology means detection tools are struggling to keep pace. Experts note that fakes are becoming harder to detect and that current detection techniques are starting to fail as generative AI imaging tools grow more sophisticated and are specifically designed to fool detectors. This ongoing challenge underscores the critical need for increased media literacy among the public. Individuals are urged to scrutinize sources, look for visual inconsistencies, and use reverse image searches before sharing content, especially during fast-evolving crises.

Navigating the Information Labyrinth

The digital landscape surrounding the Iran protests exemplifies a new era of information warfare, where AI-generated fakes and strategically repurposed old videos are potent tools for manipulation. This deliberate obfuscation of reality poses a profound challenge to journalistic integrity, public discourse, and the very understanding of critical global events. As the lines between authentic and artificial content become increasingly blurred, the responsibility falls not only on fact-checkers and technology developers but also on individual consumers of information to cultivate a critical eye. In an environment where misinformation spreads with unprecedented speed and sophistication, vigilance and a commitment to verified sources are paramount to navigating the complex labyrinth of digital deception and upholding the truth of events unfolding in Iran.
